Alexis Lanternier, the CEO of Deezer, recently released an official statement. He revealed that an average of 75,000 AI-generated tracks are uploaded to the platform every day, accounting for 44% of all uploads.

This is a significant data point. Why? Because it essentially validates the thesis we’ve been advocating. 75,000 AI-generated songs per day works out to more than 2 million per month. But how many of these tracks, or the AI-native “artists” behind them, actually evolve into real, professional music brands? That’s exactly where the problem lies.

That said, I’m not going to turn this into a RockAgent thesis piece. We’ve written enough of those over the past five months. The real question I want to focus on is this: when AI-driven music production reaches this scale, what actually creates differentiation? I have a theory about this, which I call the “Rick Rubin Model.”

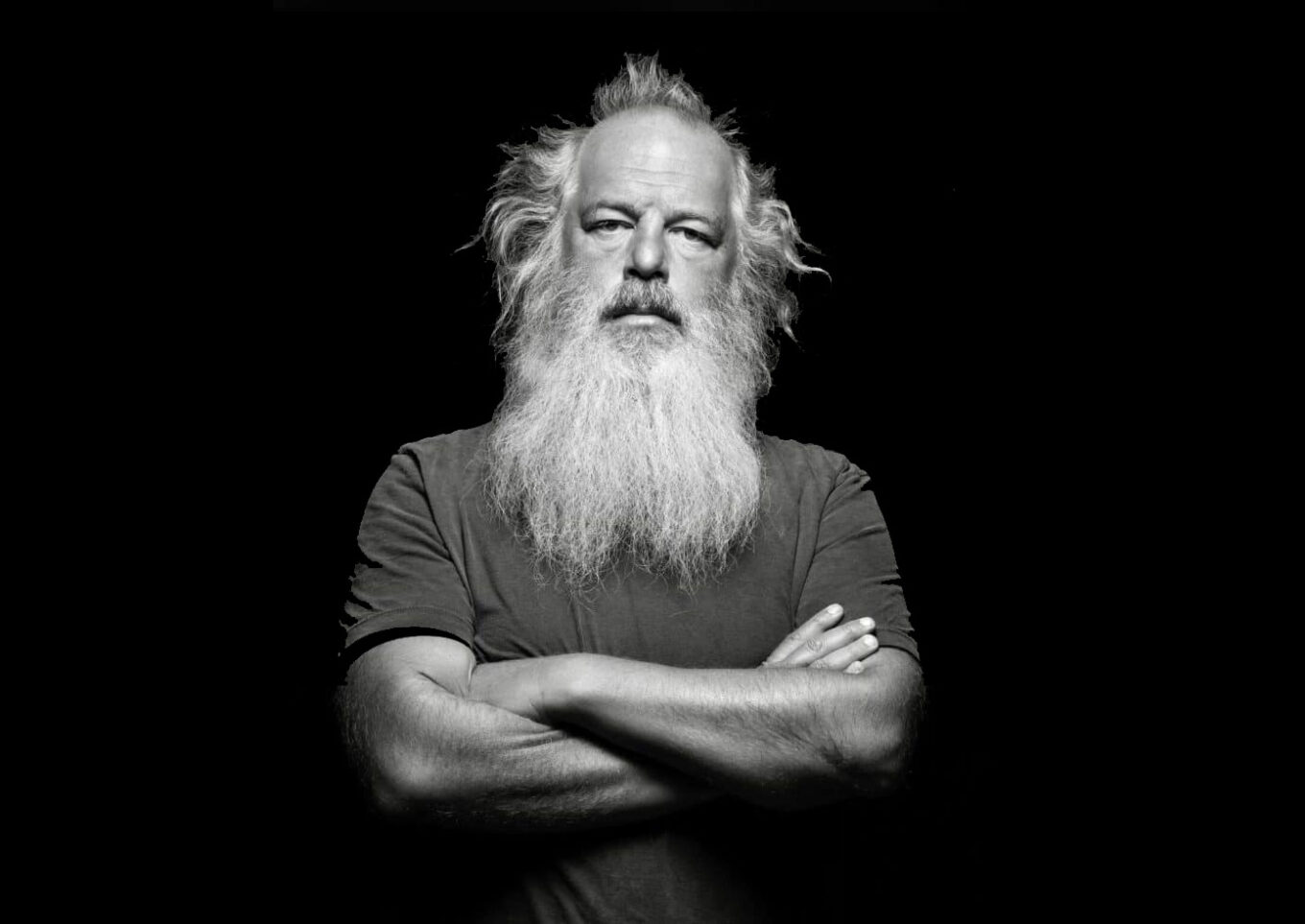

So who is Rick Rubin?

He’s the producer behind records by some of the most important bands in history, including Slayer, Red Hot Chili Peppers, and Metallica. But here’s the interesting part: by his own account, he has no formal musical training or technical ability, barely plays an instrument, and doesn’t sit at the console editing or mixing. What he does is listen to what artists create and make intuitive decisions about which version is better.

Rubin worked with some of the greatest bands of all time, and he shaped their music simply by listening. He identified what needed to be cut, what needed to be emphasized, and what needed to be added. What he brought was taste in the purest sense, the kind a curator has. He approached music like a connoisseur, constantly trying to distinguish what is good from what is not. And artists trusted that taste.

With AI, music production is now accessible to everyone: tools like Suno, Udio, and MiniMax let anyone generate music. So what will create real differentiation is the ability to recognize what is actually good, and the judgment required to get there. In other words: taste.

We’re already starting to see early examples of this. AI-native artists who build around a consistent concept and turn it into a cohesive album are beginning to stand out. In my view, Anatolian Psych Rock Lab is one of the most striking examples.

It’s no longer about producing music. It’s about having the taste and the ear to recognize which music people will actually listen to, then packaging it into a consistent, professional form and bringing it to the world.